Why Your Marketing Keeps Failing (And It's Not Your Strategy)

Last updated: 2026-04-06

I keep hearing the same thing dressed up differently: we know what we should be doing, but we can't make it actually work.

The revenue leader reckons the strategy needs fixing. The marketing leader reckons they're doing the work but something's off. Both of them are looking at the strategy, and from what I'm seeing, that's almost never where the problem actually lives.

The market is full of folk selling better GTM playbooks. Tighter ICP definitions. More sophisticated demand gen frameworks. And organisations keep buying them, because the problem genuinely looks like a strategy problem from the outside.

But go into the actual system - the ad account, the CRM, the team's day-to-day workflow, the data infrastructure - and you almost always find something else entirely. Something that has nothing to do with the quality of the plan.

I've found five patterns that come up again and again. None of them are the strategy.

1. The marketer is trying. That's what makes it hard to see.

This is the most common pattern, and the slowest to diagnose. A revenue leader has a series of disappointing moments. The marketer said they'd do something and didn't, or they're oblivious to why a deadline matters, or the results keep coming in below expectation. But the marketer is clearly doing 'stuff.' Some of that stuff is doing 'something.' So there's not enough pain to force a change - until eventually there is.

Junior effort cannot substitute for senior pattern recognition, no matter how hard the junior works. That's the core of this one. A junior or mid-level marketer can run campaigns, produce content, test variations - but they tend to optimise the variables directly in front of them rather than questioning whether those are the right variables to optimise at all. Someone with twenty years of experience walks in and changes the thing the junior marketer didn't know to look at.

I worked with one company where the marketer had been at it for eight months - tweaking creative, testing different audiences on Meta, iterating on copy. Proper busy. But they were running variants on the wrong channel entirely, targeting audiences that weren't in market. I came in, shifted spend to Google Ads to reach people actively searching, changed the messaging around the value prop and the customer journey, and got them from zero to 500 enquiries a day in six weeks. The marketer was genuinely learning - but he didn't have the experience to see that the channel was the problem, not the creative.

What makes this pattern so hard to spot is that the output - the campaigns, the content, the reports - all looks like strategy work. So the revenue leader commissions a new strategy. New positioning. New messaging framework. None of it changes anything because the person executing it is still optimising the wrong variables. The actual gap never gets named, because naming it feels brutal.

2. The strategy was built for a team that doesn't exist yet.

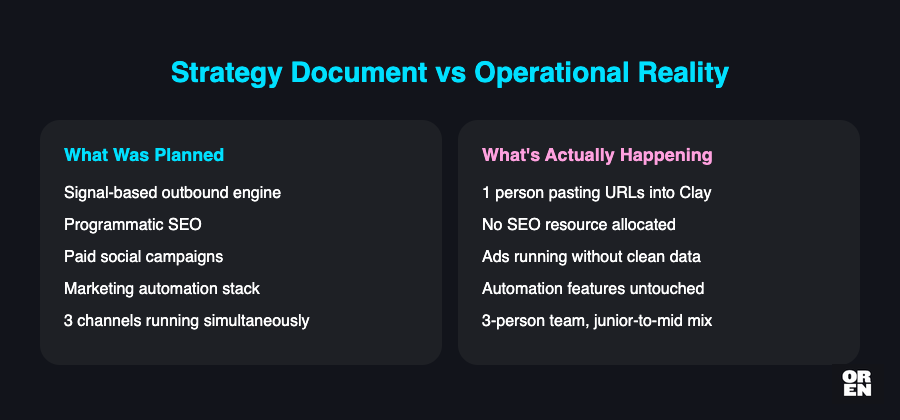

This one is related but distinct. The team isn't necessarily bad at what they do - there just aren't enough of them to cover what's been scoped.

A three-person team with a junior-to-mid skill mix cannot run signal-based outbound, programmatic SEO, and paid social simultaneously - and do any of them properly. But that's often exactly what gets planned. Everyone nods in the meeting. Nobody does the maths on whether the capacity exists. The gap doesn't show up in the planning document. It shows up three months later as slow progress and missed commitments.

The strategy calls for a proper outbound engine. The execution is one person doing manual data entry. From the outside, outbound is 'running.' The gap between what was planned and what's actually happening is enormous, and nobody's looking at the operational level closely enough to see it.

The activity exists, but the systems, tooling, and headcount needed to run it properly don't - and because nobody's comparing the strategy document against what's actually happening day-to-day, the gap quietly compounds. The board concludes the GTM motion isn't working and brings in a consultant to redesign it, when the motion itself was sound and simply lacked the people to run it.

The fix isn't always "hire more people." Sometimes it's narrowing the scope to what the team can genuinely execute with quality. But you can't make that call until you've looked at what's actually happening on the ground, not just what the plan says should be happening.
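
The maths nobody does in the planning meeting can be very rough and still be useful. Here's a minimal sketch, assuming a three-person team and three workstreams; every hour figure below is a hypothetical placeholder rather than a benchmark, and the names `Workstream` and `capacity_gap` are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Workstream:
    name: str
    hours_per_week: float  # assumed effort to run this workstream properly


def capacity_gap(team_size, productive_hours_per_person, workstreams):
    """Return planned hours minus available hours (positive means over-scoped)."""
    available = team_size * productive_hours_per_person
    planned = sum(w.hours_per_week for w in workstreams)
    return planned - available


# Hypothetical plan: swap in your own estimates.
plan = [
    Workstream("signal-based outbound", 45),
    Workstream("programmatic SEO", 40),
    Workstream("paid social", 35),
]

gap = capacity_gap(team_size=3, productive_hours_per_person=30, workstreams=plan)
print(f"Weekly capacity gap: {gap:+.0f} hours")
```

A positive number means the plan asks for more hours than the team has. With these made-up figures the plan is 30 hours a week over, which is roughly one workstream that either needs a hire or needs to come out of scope.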

3. The marketing leader has a plan. The CEO doesn't believe in marketing.

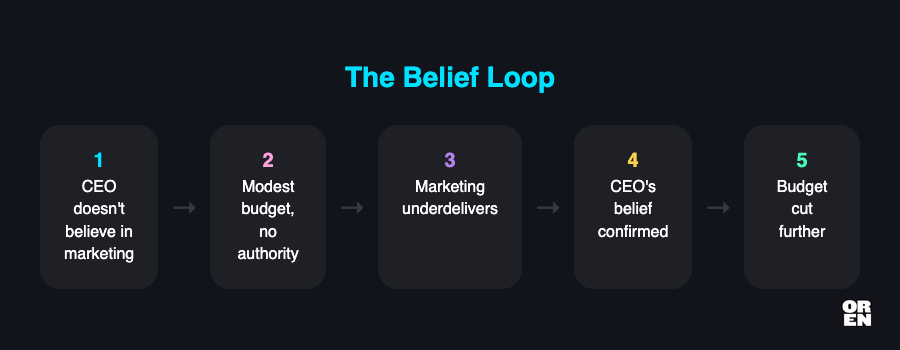

Sometimes the strategy is right, the team is capable, the execution is happening - and the results still don't come. Not because of anything in the marketing function. Because of what's happening above it.

The pattern looks like this: a marketing leader who has a budget, has a plan, has genuine capability - but is fighting for meeting time, chasing sign-offs, and watching alignment drag on for weeks. Every decision takes longer than it should. Every initiative needs another round of approval. From the outside it looks like slow execution. What it actually is: an organisation that doesn't believe marketing contributes meaningfully to revenue.

I had a contact describe it to me recently. The CEO keeps hiring more salespeople. The marketing budget is modest. The channels that are 'working' are partners and outbound sales - channels that exist completely independently of anything the marketing function does. Marketing is tolerated, not believed in.

The marketing leader knows this, by the way (at least the ones I've worked with do). They're not oblivious. But they think they can fix it with results, or they think belief will build over time, or they're just keeping the function alive until the political winds shift. From what I've seen, the capability is real - but the political read is wrong on all three counts.

No amount of better execution fixes a fundamental belief problem at leadership level. The organisation won't give marketing the resources, the authority, or the runway to actually succeed - and so marketing keeps underdelivering, which confirms the original belief that it doesn't work. It's a closed loop, and it's invisible because it looks exactly like a marketing execution problem from every angle.

The CEO looks at the pipeline numbers, concludes marketing isn't delivering, and cuts the budget further. Which confirms exactly what they already believed. Nobody is looking at whether the organisation was ever set up to let marketing succeed in the first place.

You can have the right strategy and the right team and still get nowhere if the belief isn't there above you. That's not a marketing problem - that's an organisational one. And it's genuinely one of the harder things to name, because naming it means questioning decisions that were made well above the marketing function.

4. The thing got built. Nobody's using it.

This one I've seen up close, and it's maddening.

Loads of time and real effort go into building something genuinely good - a scoring model, a dashboard, a set of automations that would actually work - and then the team routes around it, doing things manually because that's what they know. The tool functions. The behaviour doesn't change, which means the results don't either.

What I've seen specifically: a sales team handed a predictive scoring model and proper data infrastructure, and their response was essentially that they'd ignore it and manually hack leads on LinkedIn instead. Not because the model was wrong. Because using it required them to change how they worked, and nobody had made that case, set that expectation, or built any accountability around it. The tool was real and functional - the adoption plan was the thing that was missing.

Building something is satisfying and visible - you can show it, demo it, stick it in a board update. Changing how a team actually works day-to-day is slow and invisible and genuinely difficult. So organisations fund the first thing and assume the second will follow.

The cost of this is invisible in a specific way: the organisation spent the money, so it reckons it has made the investment. The tool exists and works - but nobody's using it, which means the results are the same as if it had never been built.

So leadership looks at the flat results and concludes the tool was a bad investment. They buy a different one, with the same outcome, because getting a team to genuinely change how they work day-to-day is a separate project from building the tool - and most organisations treat them as the same project.

5. The measurement is broken and everyone's making decisions on it anyway.

Technical, this one. And more common than anyone wants to admit.

Conversion tracking firing on the wrong events. Double-counting across platforms. A form collecting leads into a database nobody's checked since February. These aren't edge cases - I've seen all three running for weeks before anyone noticed.

One situation I saw: conversion tracking was broken for three consecutive weeks. Double-counting, wrong events firing, Chrome preview mode giving unreliable results. Three weeks of a company spending real money on ads with no reliable measurement. And the problem kept sliding because fixing it is unglamorous work - it's not a new campaign, it's not a new strategy, it's not anything you can put in a board update with a sense of momentum.

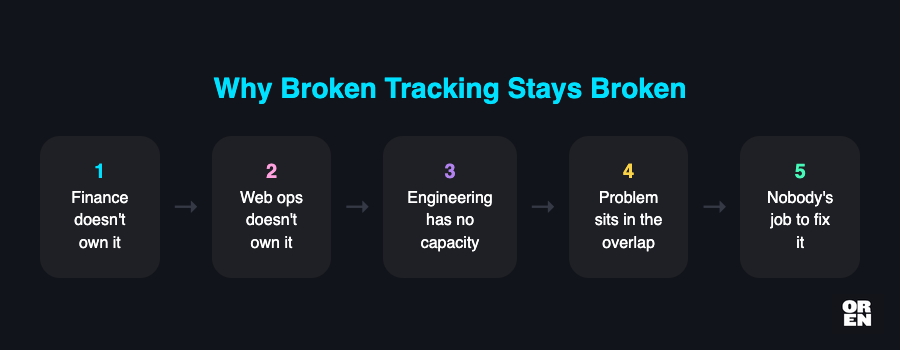

So it slides. And the reason it slides is often structural: the fix sits in the cross-functional gap between teams. I saw this recently with a company where tracking had been broken for months. Finance didn't own it. Web ops didn't own it. Engineering had capacity issues. The problem lived in the overlap between three teams, and because none of them fully owned it, it just sat there. That's the mechanism - it's not that nobody cares, it's that nobody's job description includes fixing it.

And while it slides, decisions get made on bad data. Channels get cut that were actually working. Budgets shift toward campaigns that look good in the dashboard but are benefiting from attribution errors. The team is optimising against the wrong signal.

What makes broken measurement so hard to see is that it doesn't look broken. It looks like a working dashboard. The numbers are there. The graphs are moving. The reports get sent. Everyone in the meeting is looking at data and making decisions and feeling like they're running a data-driven marketing function - they're not. They're running a data-decorated one, which is a very different thing.

The CMO reviews the dashboard, sees paid isn't performing, and shifts budget to organic. Except paid was performing - the tracking was just broken. The strategy adapts to a reality that doesn't exist, and nobody questions whether the data underneath was ever trustworthy.
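
The double-counting check is one of the cheaper ones to run. Here's a minimal sketch, assuming you can export conversions from each ad platform with a shared order or lead ID; the field names `order_id` and `platform`, the function `find_double_counted`, and the sample rows are all hypothetical, and real exports will need their own matching logic.

```python
from collections import defaultdict


def find_double_counted(conversions):
    """Return order IDs that more than one platform has claimed as a conversion."""
    platforms_by_order = defaultdict(set)
    for record in conversions:
        platforms_by_order[record["order_id"]].add(record["platform"])
    return {
        order_id: sorted(platforms)
        for order_id, platforms in platforms_by_order.items()
        if len(platforms) > 1
    }


# Hypothetical export rows, one per conversion a platform reported.
conversions = [
    {"order_id": "A100", "platform": "google_ads"},
    {"order_id": "A100", "platform": "meta"},
    {"order_id": "A101", "platform": "google_ads"},
    {"order_id": "A102", "platform": "meta"},
    {"order_id": "A102", "platform": "google_ads"},
]

duplicates = find_double_counted(conversions)
reported = len(conversions)
actual = len({c["order_id"] for c in conversions})
print(f"Platforms report {reported} conversions; {actual} unique orders")
print(f"Claimed by more than one platform: {duplicates}")
```

If the platforms' totals add up to noticeably more than the unique orders in the CRM, the dashboard is overstating results somewhere. That doesn't tell you which platform deserves the credit, only that the totals can't be trusted as they stand.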

Why this keeps happening

Every one of these five patterns looks like a strategy problem from inside the organisation - but each one produces a different surface symptom that sends leadership down the wrong path. A capability gap gets misread as a strategy that needs refining. A capacity problem presents as slow execution. Political dynamics masquerade as marketing underperformance. Unused tools get written off as bad investments. And broken measurement makes campaigns look like they're failing when the data itself is the thing that's wrong. Completely different underlying causes, all producing the same conclusion: the strategy needs more work.

That's what makes this frustrating to watch. Organisations keep hiring strategy consultants and refining their GTM playbooks because that's what the problem looks like. And every time the strategy improves and the results don't follow, it confirms the belief that the strategy just needs more work. It's a loop that can run for years.

Breaking the loop requires someone to go into the actual system - the ad account, the CRM, the team's day-to-day workflow, the data infrastructure, the org chart and who actually has authority to make decisions - and find where the operational or technical reality has diverged from what everyone assumed was happening. That diagnostic step is the thing most organisations skip - not because they don't care, but because it requires someone who understands marketing well enough to know what good looks like, and understands the operational and technical layer well enough to see where it's broken. Those two things don't often live in the same person.

If your marketing isn't working and the strategy looks fine, the strategy is probably fine. The place to look is the gap between the plan and the result - what's actually happening in the system between those two points.

Frequently Asked Questions

Why do marketing problems get misdiagnosed as strategy problems?

The visible outputs of marketing work - campaigns, content, reports - all look like strategy work, making it easy to assume the strategy needs fixing. But the real issues typically live in the execution layer: skill gaps, capacity constraints, broken tracking, or lack of leadership buy-in.

Can a junior marketer compensate for lack of experience by working harder?

No - junior effort cannot substitute for senior pattern recognition, no matter how hard the junior works. Junior and mid-level marketers tend to optimise the variables directly in front of them rather than questioning whether those are the right variables to optimise at all.

What happens when a CEO doesn't believe marketing contributes to revenue?

Marketing gets insufficient resources, authority, and runway to succeed, causing it to underdeliver - which confirms the original belief that it doesn't work. This creates a closed loop that looks exactly like a marketing execution problem from every angle, making it very difficult to diagnose.

Why do teams fail to adopt tools and systems that have been built for them?

Building a tool is visible and satisfying, so organisations fund it and assume behaviour change will follow automatically. But getting a team to change how they work day-to-day is a separate project that requires its own planning, expectation-setting, and accountability - and most organisations treat the two as the same project.

How does broken conversion tracking damage marketing decisions?

Broken tracking produces a dashboard that looks functional - numbers are present, graphs are moving - so decisions get made against bad data without anyone realising it. Channels that were actually working get cut, and budgets shift toward campaigns that only appear to perform due to attribution errors.

Oren Greenberg

GTM Engineering Advisor · 21+ years in growth & GTM

Oren advises B2B SaaS companies on go-to-market engineering, growth strategy, and AI-driven marketing.
