When a Growth Audit Finds the Problem You Weren't Looking For

Last updated: 2026-04-06

Let's start with something most CEOs don't want to hear.

Your growth engine isn't underperforming because your marketing team lacks skill. It's underperforming because you're solving problems in the wrong order.

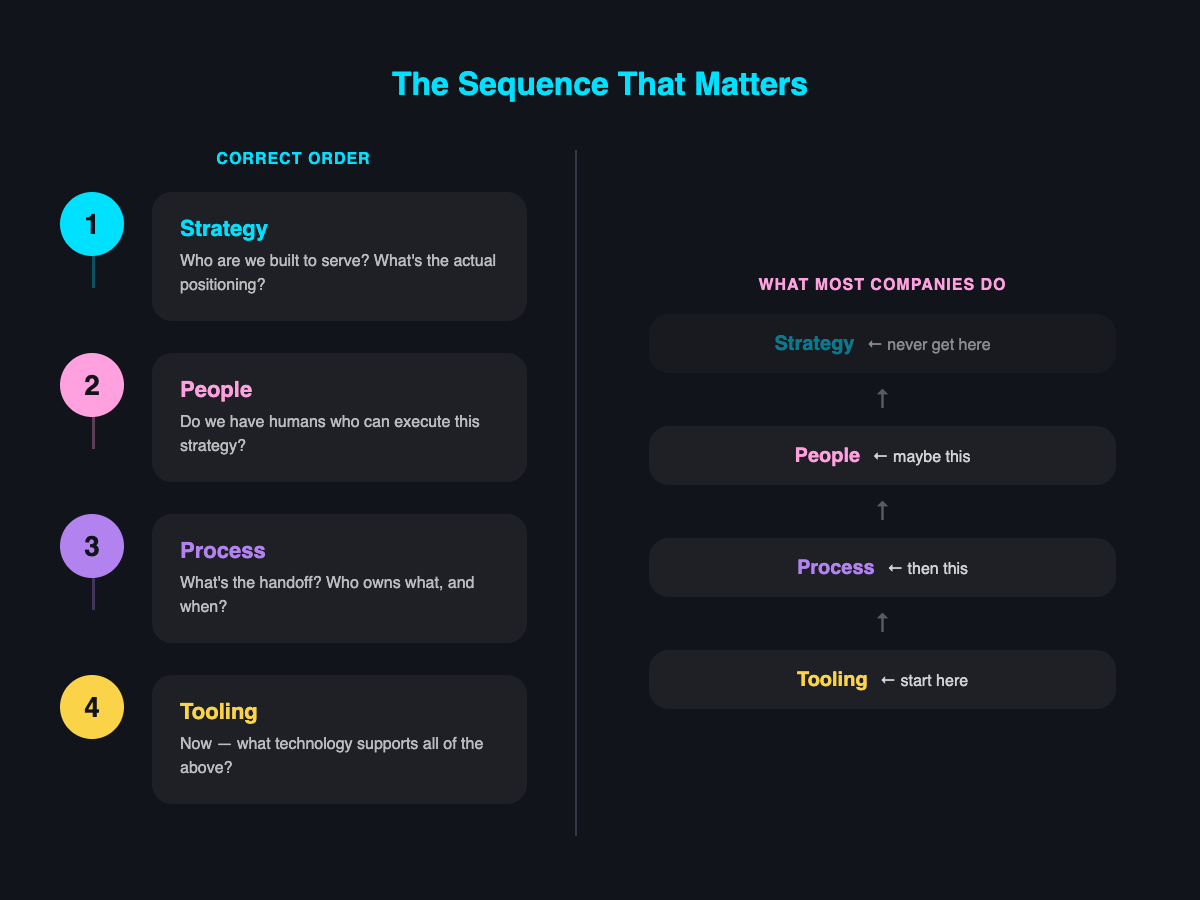

Strategy → People → Process → Tooling

This sequence matters more than anyone admits.

Most companies do it backwards. They buy Salesforce, hire a RevOps person to implement it, then 6 months later realise they never defined what a qualified lead actually means. So sales and marketing are still fighting about the same 73% of leads that never get contacted (because nobody agrees they're worth contacting).

Strategy first means answering: who are we actually built to serve? Not who we could serve. Not who our competitor serves. Who converts fastest, stays longest, and expands most predictably?

People second means: do we have humans who can execute this strategy, or are we asking a demand gen specialist to do growth engineering? (These are not the same role, despite what LinkedIn says.)

Process third means: what's the actual handoff mechanism between marketing and sales? What happens when a lead converts? Who owns it, when, and what's the SLA?

Tooling last means: now that we know what we're doing and who's doing it and how it flows, what technology supports that?

Getting the sequence wrong has real costs. The same logic applies to the broader marketing framework: you look at the landscape first, then you do the segmentation, only then the messaging, only then the value prop. Skip a step or do them out of order and everything downstream is built on the wrong assumptions. Most of the strategies I see get the sequence wrong and, as a result, allocate resources incorrectly across those buckets.

The Overlap Problem

The points of maximum breakage in any growth engine are where functions overlap.

I asked a team recently how aligned sales and marketing are, scale of 1 to 10. Scores ranged from 6 to 9.

That's not an alignment problem. That's a perception problem: the same team can't agree on how aligned it is. And perception gaps create process gaps.

The pattern: the more departments touching something, the higher the probability it breaks. A process involving 5 to 12 departments is far more likely to fail than one involving 2, because every extra handoff is another place for it to break.
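The departments pattern above can be made concrete with a toy model. This is a minimal sketch, not a measured result: it assumes every pair of departments is one potential handoff, that handoffs fail independently, and that they all share the same reliability. The function name and the `handoff_reliability` parameter are hypothetical, and the 0.98 used in the example is an arbitrary placeholder.

```python
from math import comb


def process_success_probability(departments: int, handoff_reliability: float) -> float:
    """Chance a process survives every handoff, under the toy assumptions above."""
    if departments < 1:
        raise ValueError("departments must be at least 1")
    if not 0.0 <= handoff_reliability <= 1.0:
        raise ValueError("handoff_reliability must be between 0 and 1")
    # Assumption: every pair of departments is one potential handoff.
    handoffs = comb(departments, 2)
    # Assumption: handoffs fail independently, so their reliabilities multiply.
    return handoff_reliability ** handoffs


if __name__ == "__main__":
    for n in (2, 5, 12):
        p = process_success_probability(n, handoff_reliability=0.98)
        print(f"{n} departments, {comb(n, 2)} handoffs: {p:.0%} chance nothing breaks")
```

Even with a generous per-handoff reliability, the model's odds of a clean run fall quickly as departments are added, because the number of handoffs grows faster than the number of departments. Real organisations won't match these assumptions exactly; the point is the shape of the curve.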

Proximity matters too. Sales and marketing speak the same language (mostly). Engineering and sales don't. The further apart the functions, the more translation overhead, the more opportunities for meaning to get lost.

Most companies try to solve this with more meetings. That's not the fix. The fix is reducing overlap - which means deciding who actually owns what, then ruthlessly eliminating shared accountability. Shared accountability is just accountability theatre.

There's also an underutilisation problem that sits underneath all of this. Someone told me recently that they wished they could pause for 6 months just to properly implement the tech stack they've already got. But they can't, because everything's changing too quickly. There's an awareness of chronic inefficiency, and it's not just one company. Everyone I work with, in every business, knows it. That's their day-to-day - battling to fix problems they can see but don't have the bandwidth to address.

The variables that make each company's engine unique are genuinely complex. Their industry, their target audience, their business DNA, their ACV, how differentiated they are, their tech stack, their processes, and the team - in terms of competence, resource availability, and efficiency. It's like an engine that's also a basketball game (and we probably need a better metaphor). There are components of an assembly line, but it's a business, so there's an organic component because it's people. Highly dynamic and adaptable. But constrained by static infrastructure - APIs, the handful of CRMs that are actually good, integration limitations that plague every company regardless of size.

The point is you can't diagnose this with a template. Every engine is different, and different parts are broken or malformed for different businesses.

The ICP Work That Surfaced Something Bigger

A services company brought me in to build Ideal Customer Profiles. Multiple service lines, a clear strategic target to shift their revenue mix toward growth segments.

Standard ICP work. Enrich the CRM data with external signals, run the analysis, identify which variables predict winning.

The initial profiles looked solid. But when we stress-tested the methodology - rebuilt at company level instead of deal level, clustered the correlated variables, controlled for the things that needed controlling - the picture changed completely.

Most of what looked like independent signals were proxies for the same thing: is this a large, regulated company? The ICP was really measuring company size wearing a disguise.
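To illustrate what "proxies for the same thing" looks like in practice, here is a minimal sketch that groups variables whose pairwise correlation is high. It is not the method used in the engagement: it swaps in a simple threshold-plus-connected-components grouping, assumes the data is already one row per company, and uses invented column names and toy values (`employee_count`, `compliance_roles`, `tech_stack_size`, `interim_cto`) along with an arbitrary 0.8 threshold.

```python
from itertools import combinations
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant column has nothing to correlate with
    return cov / sqrt(var_x * var_y)


def group_correlated(columns, threshold=0.8):
    """Group column names linked, directly or via a chain, by |correlation| >= threshold."""
    parent = {name: name for name in columns}

    def find(name):
        # Union-find lookup with path halving.
        while parent[name] != name:
            parent[name] = parent[parent[name]]
            name = parent[name]
        return name

    for a, b in combinations(columns, 2):
        if abs(pearson(columns[a], columns[b])) >= threshold:
            parent[find(a)] = find(b)

    groups = {}
    for name in columns:
        groups.setdefault(find(name), []).append(name)
    return sorted(sorted(group) for group in groups.values())


# Toy, invented data: one value per company, not real CRM records.
toy_columns = {
    "employee_count": [50, 200, 1000, 5000, 20000],
    "compliance_roles": [0, 1, 5, 30, 110],
    "tech_stack_size": [8, 15, 40, 90, 150],
    "interim_cto": [0, 1, 0, 1, 0],
}

if __name__ == "__main__":
    for group in group_correlated(toy_columns):
        print(group)
```

On this toy data the three size-flavoured columns land in one group and `interim_cto` stands alone. Each group would then be treated as a single dimension in the ICP rather than as several independent signals.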

But the real insight wasn't in the ICP factors at all.

It was strategic. There are implied characteristics that aren't publicly detectable, but you can see them when you put a few variables together. And what those variables showed was that one part of the business was structurally different from the rest. Different buyer profile. Different sales cycle. No cross-sell synergy with anything else.

The company thought they had a targeting problem. The data said they had a portfolio coherence problem. And that kind of problem creates drag across the entire go-to-market engine - sales pulling in 2 directions, marketing unable to build coherent messaging, pipeline friction that looks operational but is actually strategic.

Even though you're thinking "we just need to optimise the ads," the reality is the mistake was made at a much higher level.

This is the bit that surprises people. The ICP brief was narrowly scoped - tell us who to target. But when you actually stress-test the data, the findings almost never stay inside the brief. In this case, the targeting question was unanswerable until the portfolio question was resolved. You can't build a coherent ICP for a business that's trying to serve fundamentally incompatible buyer profiles from the same go-to-market engine.

The hidden layers in most GTM systems mean the symptoms show up in one place and the cause lives somewhere else entirely.

There were useful ICP findings too, once the portfolio noise was stripped out. Different service lines had genuinely different - sometimes opposite - buyer profiles. One converted best with complex, mature tech stacks. Another converted best with simpler ones (they were buying help to build the infrastructure, not test it). The strongest disqualifying signal across the board was leadership transition - an interim CTO in post more than halved the probability of winning. Procurement freezes when the decision-maker is temporary.
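A disqualifying signal like the leadership-transition one can be checked with simple arithmetic: compare the win rate when the signal is present with the win rate when it is absent. The sketch below assumes company-level records stored as dictionaries with a boolean `won` field and a boolean signal field. The field names and the example records are hypothetical placeholders, not data from the engagement.

```python
def win_rate(outcomes):
    """Share of wins in a list of booleans, or None if the list is empty."""
    return sum(outcomes) / len(outcomes) if outcomes else None


def win_rate_ratio(records, signal):
    """Win rate with the signal present divided by win rate with it absent."""
    with_signal = [r["won"] for r in records if r[signal]]
    without_signal = [r["won"] for r in records if not r[signal]]
    rate_with = win_rate(with_signal)
    rate_without = win_rate(without_signal)
    if rate_with is None or not rate_without:
        return None  # a segment is empty, or the baseline win rate is zero
    return rate_with / rate_without


if __name__ == "__main__":
    # Invented placeholder records, purely to show the call shape.
    example_records = [
        {"won": True, "interim_cto": False},
        {"won": True, "interim_cto": False},
        {"won": False, "interim_cto": False},
        {"won": False, "interim_cto": True},
        {"won": True, "interim_cto": True},
        {"won": False, "interim_cto": True},
        {"won": False, "interim_cto": True},
    ]
    print(win_rate_ratio(example_records, "interim_cto"))
```

A ratio below 0.5 is what "more than halved" means in these terms. A raw ratio like this doesn't control for anything, so it only becomes trustworthy once confounders such as company size have been handled.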

None of that is visible from inside a CRM. It only appears through enrichment - and only holds up once you've controlled for the variables that are really just measuring the same underlying dimension. Most ICPs don't do either.

What a Growth Audit Actually Does

Everyone I work with, in every business, knows there are chronic inefficiencies. Their day-to-day is a battle to fix them.

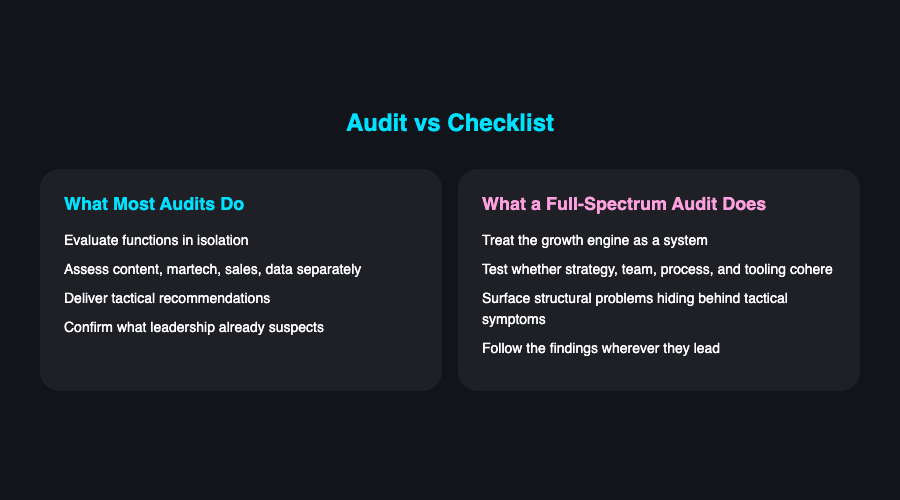

Most growth audits evaluate functions in isolation. They'll assess your content strategy, your martech stack, your sales process, your data quality - separately. Then they'll give you a list of tactical recommendations.

That's not an audit. That's a checklist.

A full-spectrum audit treats the growth engine as a system. It asks whether your strategy is coherent, whether your team is structured to execute it, whether your process supports the handoffs, and whether your tooling enables the process.

It comes down to foundational principles. How differentiated is the offer? Are you attracting the right customer for that offer? What does your sales process look like for converting them? Are you managing to retain them? How defensible is your moat? And very importantly - the feedback loop. How are you accelerating learning?

Most companies can answer the first 2. Almost none have a clear answer for the last one. And the feedback loop is where the compounding advantage lives - the companies that learn fastest about what's working and what isn't are the ones that pull away.

The irony is that most leadership teams already suspect something is structurally wrong. They can feel it. But the audit that tells them exactly where - and forces them to confront the uncomfortable answer - is the one they keep deferring.

And that brings it back to the sequence. Strategy, people, process, tooling - in that order. Most audits start at tooling and work backwards, which is why they end up producing recommendations that feel right but don't move the needle. You can't target your way out of a positioning problem. You can't process your way out of a skills mismatch. And you definitely can't optimise your way out of a portfolio that doesn't make sense.

The question isn't whether your growth function needs an audit. It's whether you're willing to start with strategy instead of tooling. And whether you're prepared for the findings to outgrow the brief.

Frequently Asked Questions

Why do most companies' growth engines underperform?

Most companies solve problems in the wrong order, typically starting with tooling and working backwards instead of beginning with strategy. They buy technology and hire people before defining fundamental things like what a qualified lead actually means, so downstream processes are built on flawed assumptions.

What is the correct sequence for building a growth engine?

The correct order is strategy first, then people, then process, then tooling. Strategy defines who you're built to serve, people ensures you have the right roles to execute, process establishes handoffs and ownership, and only then should technology be selected to support everything upstream.

Why do growth problems so often appear in one place but originate somewhere else?

The points of maximum breakage occur where functions overlap, and the more departments involved in a process, the higher the probability of failure. Symptoms like pipeline friction or misaligned messaging often look operational but are actually caused by strategic issues such as an incoherent portfolio or incompatible buyer profiles.

What makes a growth audit different from a standard checklist review?

A genuine growth audit treats the entire growth engine as a system rather than evaluating functions in isolation. It assesses whether strategy, team structure, process, and tooling are coherent and mutually reinforcing, rather than producing a list of disconnected tactical recommendations.

Why do most ICPs fail to produce accurate targeting insights?

Most ICPs are built at the deal level without controlling for variables that are actually measuring the same underlying dimension, such as company size masquerading as multiple independent signals. They also rarely incorporate external enrichment data, which means signals like leadership transitions that strongly predict win or loss rates remain invisible.

Oren Greenberg

GTM Engineering Advisor · 21+ years in growth & GTM

Oren advises B2B SaaS companies on go-to-market engineering, growth strategy, and AI-driven marketing.
