
Everyone's Collecting AI Skills. That's the Problem.

Last updated: 2026-04-06

Your AI skills library is the wrong answer to the right question.

Take a concrete example. A skill built to pull a LinkedIn post draft into Kit for publishing - formatted, tagged, ready to go. It works perfectly. Then someone on the team switches to Substack. The skill breaks immediately. Not because it was poorly built, but because it was doing exactly what it was designed to do: execute a specific sequence of steps inside a specific tech stack. Move one component, the whole thing falls apart.

That's not a bug.

That's the nature of the thing. The problem isn't the skill. The problem is what people think skills are.

Skills are SOPs, not Swiss Army knives

So what is a skill, actually?

A skill is essentially a prompt.

A well-structured one, often with conditional logic and defined inputs and outputs.

But a prompt. That makes it closer to a standard operating procedure than a transferable capability.

SOPs are hardcoded to context. They work inside the system they were designed for. Lift them out and they stop working.

Most skills are contextually specific to your tech stack and workflow.

They're not portable assets. They're documented processes for a particular configuration of tools, voice, and sequence. The LinkedIn-to-Kit publishing skill isn't a general 'social-to-email' capability. It's an instruction set for one specific journey.
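To make the SOP framing concrete, here is a minimal sketch in Python. It is not how any particular platform implements skills: the `Skill` class, its fields, and the tool names are all hypothetical, chosen only to show a prompt that silently depends on a specific stack.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """Hypothetical model of a skill: a prompt plus the stack it assumes."""
    name: str
    prompt: str                      # the instruction set itself
    required_tools: frozenset[str]   # tools the prompt takes for granted

    def missing_tools(self, stack: set[str]) -> set[str]:
        """Return the tools the skill needs that the current stack lacks."""
        return set(self.required_tools) - stack

    def can_run(self, stack: set[str]) -> bool:
        """A skill only works if every tool it assumes is present."""
        return not self.missing_tools(stack)


# Illustrative skill mirroring the LinkedIn-to-Kit example in the text.
linkedin_to_kit = Skill(
    name="linkedin-to-kit",
    prompt="Take the LinkedIn draft, format it, tag it, and publish via Kit.",
    required_tools=frozenset({"linkedin", "kit"}),
)

original_stack = {"linkedin", "kit"}
new_stack = {"linkedin", "substack"}   # the team swapped Kit for Substack

print(linkedin_to_kit.can_run(original_stack))    # True
print(linkedin_to_kit.can_run(new_stack))         # False
print(linkedin_to_kit.missing_tools(new_stack))   # {'kit'}
```

Nothing about the prompt changed between the two checks. Only the stack did, which is the sense in which a skill is a procedure for one configuration rather than a portable capability.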

There should actually be very few general-purpose skills - which goes entirely against the trend in the market right now.

Some skills are like gravity - broadly applicable, working across many configurations. Most are like niche physical laws that only operate under very specific conditions. The market is treating every skill like gravity. Almost none of them are.

When AI selects which skill to trigger, it's making a probabilistic match. Similar-sounding skills cause misfires. If you don't understand what a skill actually does at the prompt level, you can't diagnose why the output is wrong. A large library of poorly understood skills creates more diagnostic complexity than it removes.
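A toy illustration of why similar-sounding skills misfire. Real assistants use the model itself to choose a skill, not word overlap, so the scoring function below is a stand-in assumption. The skill names, descriptions, and the `margin` threshold are also made up for the example.

```python
def tokens(text: str) -> set[str]:
    """Lowercased word set; a crude stand-in for how a model 'reads' text."""
    return set(text.lower().split())


def similarity(a: str, b: str) -> float:
    """Jaccard overlap between two texts (0.0 = disjoint, 1.0 = identical)."""
    ta, tb = tokens(a), tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def rank_skills(request: str, skills: dict[str, str]) -> list[tuple[str, float]]:
    """Score every skill description against the request, best match first."""
    scored = [(name, similarity(request, desc)) for name, desc in skills.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


def is_ambiguous(ranked: list[tuple[str, float]], margin: float = 0.1) -> bool:
    """Flag a likely misfire: the top two candidates score almost the same."""
    return len(ranked) > 1 and ranked[0][1] - ranked[1][1] < margin


# Hypothetical library with two near-identical descriptions.
skills = {
    "publish-linkedin-to-kit": "publish linkedin post draft to kit newsletter",
    "publish-linkedin-to-substack": "publish linkedin post draft to substack newsletter",
    "summarise-call": "summarise sales call transcript",
}

ranked = rank_skills("publish my linkedin post draft", skills)
print(ranked[0], ranked[1])   # both publishing skills score 0.5
print(is_ambiguous(ranked))   # True
```

The request matches both publishing skills equally well, so which one fires is effectively arbitrary. Diagnosing that requires knowing what each skill says at the prompt level, which is the point the paragraph above makes.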

The recruiter getting it wrong

Why does this matter for hiring?

Hiring managers - including at the CMO level - are asking candidates to bring pre-built skills libraries as proof of AI capability.

The reasoning seems logical: someone with a large, well-organised library has done the work. They've operationalised AI. They're ahead of the curve.

They're looking at the wrong thing.

Think about it differently.

It's like asking a chef to bring their recipe collection to the interview. The recipes are free. What matters is whether they can read the kitchen - understand the equipment, adapt to what's in the pantry, design a menu that works for the specific service. A chef with 1,000 recipes who can't adapt to an unfamiliar kitchen is less useful than one with 50 who can work with anything.

Skills are recipes.

Replicable, contextually specific, and largely non-transferable. Treating them as the primary signal of AI capability mistakes the artefact for the judgment.

Efficiency without direction

The deeper problem with the skills-library arms race is that it optimises for the wrong outcome.

Early AI adopters are measurably more efficient. Tasks take less time. Volume increases. Outputs multiply. But being more efficient doesn't necessarily mean you're more effective.

I was working with a client's growth function when this became concrete.

The audit identified real inefficiencies in execution - places where automation could reduce friction and save time.

But the bottleneck wasn't efficiency.

There was a much more complicated mix underneath - commercial experience, confidence, culture, team dynamics. None of that shows up in an execution audit.

Adding automations to that situation would have produced more output of the wrong kind, faster.

The actual constraints were strategic and human: unclear commercial judgment, a team culture not aligned around the right priorities, confidence gaps affecting how decisions were made and communicated. No skill fixes that. No library of automations addresses it.

This is the same pattern that shows up in GTM diagnostics more broadly - symptoms that look like execution problems turn out to be skills mismatches, broken strategic foundations, or structural issues that tactical fixes actively make worse.

What orchestration actually is

What does good orchestration actually look like?

Orchestration is not having more tools.

It's knowing which tools to deploy, when, and how they connect to actual business outcomes. It's the architectural judgment to design systems that account for dependencies, edge cases, and the human decisions that have to happen between automated steps.

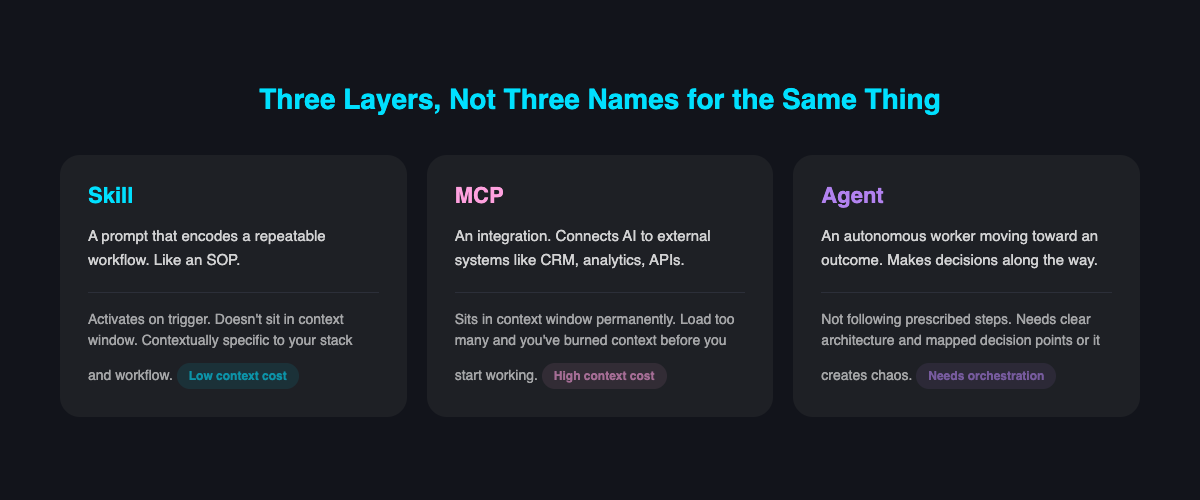

Three distinct layers of AI architecture. Conflating them causes bad decisions.

An MCP (Model Context Protocol) server is an integration layer - its tool definitions sit in the context window and consume context with every call. Use it sparingly.

A skill is a prompt. It loads only when triggered, so it adds little standing context overhead, which means you can have more of them without the same cost.

An agent is an autonomous worker oriented toward an outcome, capable of making sequential decisions to get there.


These are not interchangeable.

Treating them as variations of the same thing leads to architectures that are wasteful, brittle, or both.
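The cost difference between the layers can be sketched as simple arithmetic. Every token figure below is invented for illustration, and the model is deliberately simplified: each connected integration's definitions are counted on every call, while a skill is counted only on the call where it triggers. Idle skills are treated as free, which is a simplification.

```python
def context_per_call(mcp_definition_tokens: list[int],
                     triggered_skill_tokens: int = 0) -> int:
    """Context consumed by one call under the simplified model above."""
    return sum(mcp_definition_tokens) + triggered_skill_tokens


# Hypothetical sizes for three connected integrations (tokens each).
mcp_servers = [800, 1200, 500]

idle_call = context_per_call(mcp_servers)                               # 2500
skill_call = context_per_call(mcp_servers, triggered_skill_tokens=600)  # 3100

# Ten calls in a session, one of which triggers a skill.
session_total = 9 * idle_call + skill_call
print(idle_call, skill_call, session_total)   # 2500 3100 25600
```

Under these assumed numbers the integrations account for 25,000 of the 25,600 tokens, regardless of how many skills sit idle in the library. That is the reasoning behind using integrations sparingly while being less concerned about skill count.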

Good orchestration of agents means designing for clear role boundaries.

A content production system works well when researcher, writer, editor, and brief creator operate as separate agents in a structured sequence.

Each has a defined input, a defined output, and a clear handoff.

The system works because the roles don't bleed into each other. And the architecture is interrogated before it runs - surface the edge cases, the ambiguous inputs, the points where human judgment needs to enter, before the first task executes.
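A minimal sketch of role boundaries and handoffs, assuming each agent can be reduced to a function with one named input and one named output. The `Stage` type, the artefact names, the stage order, and the stub functions are all assumptions for illustration. In a real system each `run` would wrap a model call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Stage:
    """One agent role with a defined input, a defined output, and a handoff."""
    role: str
    consumes: str                # name of the artefact this role expects
    produces: str                # name of the artefact it hands off
    run: Callable[[str], str]    # stub standing in for the agent's work


def validate(stages: list[Stage], initial: str) -> list[str]:
    """Interrogate the architecture before it runs: every handoff must line up."""
    problems = []
    available = initial
    for stage in stages:
        if stage.consumes != available:
            problems.append(
                f"{stage.role} expects '{stage.consumes}' but receives '{available}'"
            )
        available = stage.produces
    return problems


def run_pipeline(stages: list[Stage], initial: str, payload: str) -> str:
    """Run the stages in order, refusing to start if any handoff is broken."""
    problems = validate(stages, initial)
    if problems:
        raise ValueError("; ".join(problems))
    for stage in stages:
        payload = stage.run(payload)
    return payload


# Assumed order: brief creator -> researcher -> writer -> editor.
brief = Stage("brief creator", "topic", "brief", lambda t: f"brief({t})")
research = Stage("researcher", "brief", "research", lambda b: f"research({b})")
write = Stage("writer", "research", "draft", lambda r: f"draft({r})")
edit = Stage("editor", "draft", "final", lambda d: f"final({d})")

pipeline = [brief, research, write, edit]
result = run_pipeline(pipeline, "topic", "ai skills")
print(result)   # final(draft(research(brief(ai skills))))

# Swapping two roles is caught before the first task executes.
misordered = [brief, write, research, edit]
print(validate(misordered, "topic")[0])
```

The `validate` step is the part that corresponds to interrogating the architecture before it runs. Points where human judgment needs to enter could be modelled the same way, as stages whose `run` waits for approval.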

When that judgment is applied well, the compounding effect is real - faster time to insight, faster time to execution, better outputs than competitors running bloated libraries.

Not because of the tools. Because of the architecture.


This connects directly to what separates companies that move up the AI maturity curve from those that stay stuck at the prompt engineering stage - the progression isn't about accumulating more tools, it's about building more sophisticated systems.

The hiring signal that actually works

If skills libraries are the wrong signal, what's the right one?

Ask candidates to walk you through a system they built. Not the tools they used - the system. The problem it was designed to solve. The architecture they chose and why. The dependencies they had to account for.

What broke. What that revealed.

The more intricate the engine, and the more coherently the candidate can articulate how it was built - the learning process, the mistakes observed, the gaps identified - the more capable that person is.

Sophistication shows up in the complexity someone can coherently navigate and describe.

A candidate who can explain why they made a specific architectural decision, what broke, and how they diagnosed it is demonstrating something a skills library can never show: the judgment to design systems, not just populate them.

This is the same principle that applies to GTM architecture more broadly - the competitive advantage isn't in the tools, it's in the architectural thinking that determines how they fit together. Companies building composable systems outperform companies buying integrated suites precisely because they have folk who can design, not just operate.

The right question

The AI skills marketplace is asking: how many skills do you have?

Wrong question.

The right one: how well can you design systems that produce outcomes?

Skills are the output of orchestration, not the input to it. Building a library without the architectural judgment to know what to build, when, and how it connects to business outcomes is efficient activity without strategic direction.

The competitive advantage - now and as AI systems become more capable - lies in the orchestration of progressively more intricate and complex engines.

That's what scales. And it's what's worth hiring for.

Frequently Asked Questions

What is an AI skill, really?

An AI skill is essentially a well-structured prompt, often with conditional logic and defined inputs and outputs. This makes it closer to a standard operating procedure than a transferable capability - it works inside the system it was designed for, but breaks when moved to a different context or tech stack.

Why is a large AI skills library a poor signal of AI capability in hiring?

Skills are contextually specific and largely non-transferable, like recipes tied to a particular kitchen setup. What matters is whether someone can read a new environment, adapt, and design systems that work - judgment that a pre-built library cannot demonstrate.

What is the difference between an MCP, a skill, and an agent?

An MCP server is an integration layer whose tool definitions sit in the context window and consume context with every call. A skill is a prompt that loads only when triggered, so it adds little standing context overhead. An agent is an autonomous worker oriented toward an outcome, capable of making sequential decisions to get there. Treating these as interchangeable leads to architectures that are wasteful, brittle, or both.

Can adding more AI automations fix strategic or cultural problems in a team?

No - adding automations to a team with unclear commercial judgment, misaligned culture, or confidence gaps will produce more output of the wrong kind, faster. The actual constraints in such cases are strategic and human, and no skill or automation library addresses them.

What should hiring managers look for instead of a skills library?

Hiring managers should ask candidates to walk through a system they built - the problem it solved, the architecture chosen and why, the dependencies accounted for, and what broke and what that revealed. The ability to coherently articulate that design and diagnostic process demonstrates the judgment to build systems, not just populate them.

Oren Greenberg

GTM Engineering Advisor · 21+ years in growth & GTM

Oren advises B2B SaaS companies on go-to-market engineering, growth strategy, and AI-driven marketing.
